A brief history of mapping imagery on anything different than a plane

Nowadays mankind projects its imagery mostly onto planes, as seen on most LCD screens, canvases and Polaroids. Stereoscopic video does not provide full 3D information, since the perspective is always given for one particular view, or parallax. Mapping the stereo video onto a sphere does not solve this, but at least it stores color information independent of the view angle, so it is far more immersive and gets described as a telepresence experience. A better solution for “real 3D” video would of course be to capture a point cloud with as many sensitive sensors as possible, filter it, and construct mesh data from it for rendering, but more on that later. With its 1.6 release, GStreamer added support for stereoscopic video, though I have not tested side-by-side stereo with it.
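To make the idea of storing color per direction on a sphere a bit more concrete, here is a minimal Python sketch of looking up a texture coordinate for a given view direction. It assumes an equirectangular frame layout and a "-z is forward, +y is up" convention; neither is stated in this post, and the function name `direction_to_equirect_uv` is purely illustrative.

```python
import math


def direction_to_equirect_uv(x, y, z):
    """Map a view direction to (u, v) texture coordinates in [0, 1].

    Assumptions (not taken from the post): the video frame uses an
    equirectangular layout, -z points forward and +y points up.
    """
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    x, y, z = x / length, y / length, z / length

    # Longitude: angle around the vertical axis, 0 when looking forward.
    longitude = math.atan2(x, -z)
    # Latitude: angle above the horizon; clamp to guard against rounding.
    latitude = math.asin(max(-1.0, min(1.0, y)))

    u = longitude / (2.0 * math.pi) + 0.5  # left edge 0, right edge 1
    v = 0.5 - latitude / math.pi           # top edge 0, bottom edge 1
    return u, v
```

Because the lookup depends only on the direction, the same pixel is returned no matter where the viewer's head was a moment ago, which is the "independent of view angle" property described above.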

Side-by-side stereoscopic video was becoming very popular, due to “3D” movies and screen gaining popularity. It used the headset only as a stereo viewer and didn’t provide any tracking, it was just a quick way to make any use of the DK1 with GStreamer at all.

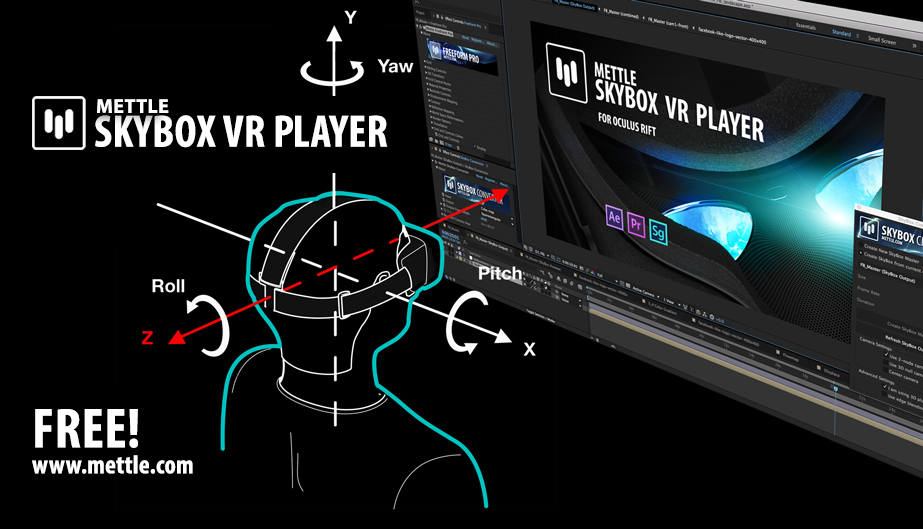

Three years ago in 2013 I released an OpenGL fragment shader you could use with the GstGLShader element to view Side-By-Side stereoscopical video on the Oculus Rift DK1 in GStreamer.
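For readers unfamiliar with side-by-side packing, the following Python sketch shows the coordinate mapping such a viewer needs: each eye samples one half of the packed frame. It is not the shader from 2013, only an illustration under the assumption that the left eye's image occupies the left half of the frame; the function name `side_by_side_uv` is hypothetical.

```python
def side_by_side_uv(u, v, eye):
    """Map per-eye coordinates (u, v) in [0, 1] into a side-by-side frame.

    Assumption (not from the post): the left eye's image is stored in the
    left half of the packed frame and the right eye's in the right half.
    """
    if eye not in ("left", "right"):
        raise ValueError("eye must be 'left' or 'right'")
    offset = 0.0 if eye == "left" else 0.5
    # Each eye only covers half of the frame width, so u is squeezed by 0.5.
    return offset + 0.5 * u, v
```

A fragment shader would apply the same arithmetic per pixel, choosing the eye from which half of the screen the pixel falls in.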
